This 5-second clip took just 10 minutes to create, but it points to a larger shift in visual storytelling. Where we once relied on slow pans across still images to evoke feeling, today’s generative tools let us add subtle motion, depth, and atmosphere that guide the eye and enhance meaning. The goal is not just to make things move; it is to make stories resonate, faster and with more intention.

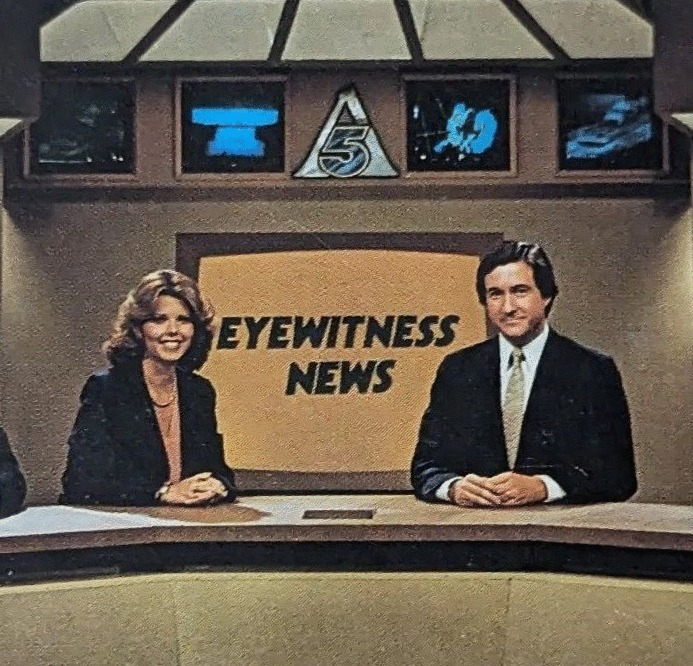

As a studio cameraman in the early 1980s at WAGA-TV in Atlanta, I spent countless hours manually pushing, pulling, and tilting cameras to bring life to static 2D artwork. This was about a decade before Ken Burns’ landmark 1990 documentary series The Civil War popularized the slow pan-and-zoom effect on still images.

Later, the introduction of the Ampex ADO in 1985 allowed us to create those moves electronically, a major leap forward. With the rise of non-linear editors and compositing software such as Final Cut Pro, Premiere Pro, and After Effects, we gained even more control over these techniques.

Today, I’m exploring a new evolution: combining AI tools with traditional editing skills to go beyond the Ken Burns effect.